Dinotisia Inc. (CEO Jung Moo-kyung), a company specializing in integrated AI and semiconductor solutions, has announced a collaboration with HyperAccel (CEO Kim Joo-young), a fabless startup focused on AI semiconductor design, to develop an AI inference system optimized for Retrieval-Augmented Generation (RAG).

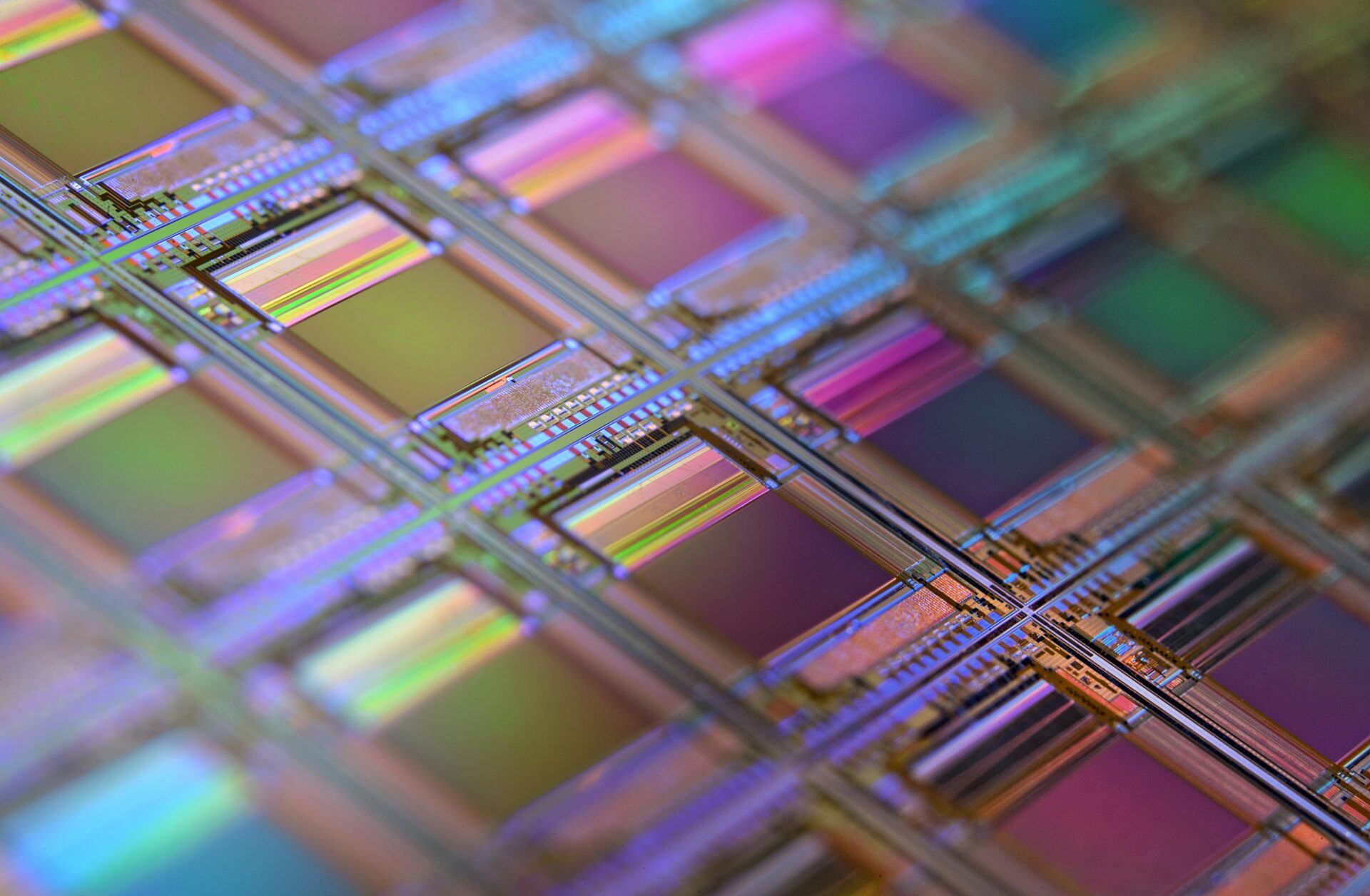

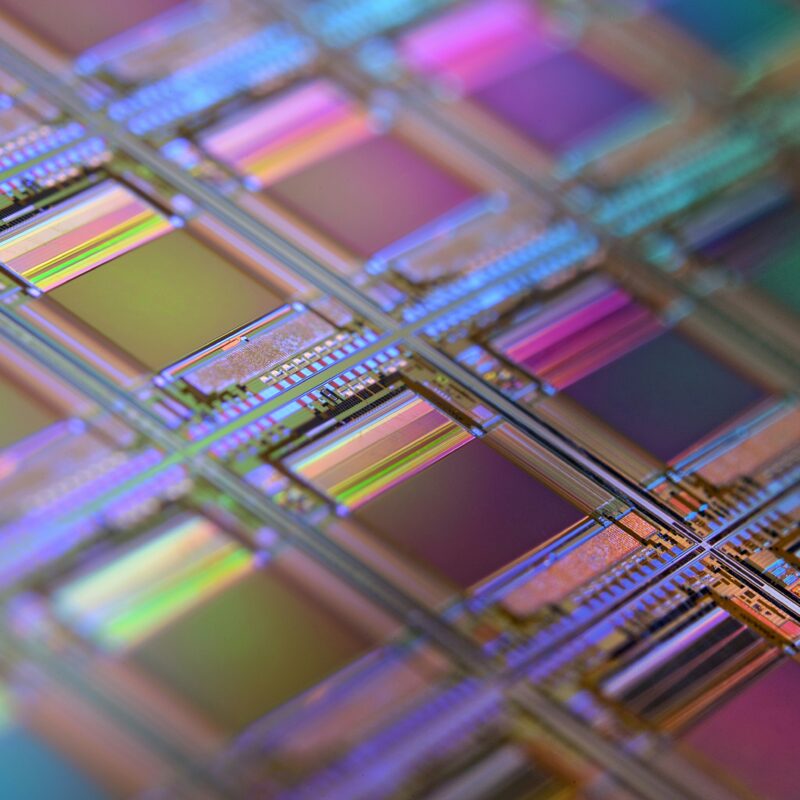

The collaboration will integrate Dinotisia’s Vector Data Processing Unit (VDPU), an accelerator chip for vector database operations, with HyperAccel’s LLM Processing Unit (LPU), an accelerator chip specialized for large language models (LLMs). The two chips will be combined into a single system.

As multimodal data grows in volume and diversity, fast data retrieval has become increasingly important for AI services. Conventional systems, which rely on software-based search alone and handle LLM generation separately, have suffered from high latency and power consumption. Dinotisia’s VDPU enables real-time retrieval and use of large-scale multimodal data, while HyperAccel’s LPU is designed to maximize AI inference performance. By combining the two technologies, the companies aim to deliver what they describe as the world’s first RAG-specialized AI system, capable of handling retrieval and inference simultaneously.

Dinotisia CEO Jung Moo-kyung stated:

“With the rapid expansion of LLM services, demand for efficient data retrieval has surged. Through this collaboration, we will introduce a new type of AI system optimized not only for inference but also for retrieval. By enabling AI to apply long-term memory, we can provide deeper user understanding and more precise personalized services. This will reduce hallucinations and mark a milestone toward more specialized, personalized AI services.”

HyperAccel CEO Kim Joo-young added:

“Eliminating bottlenecks in AI computation while achieving both performance and efficiency is the central challenge of AI semiconductors. Through this partnership, we will build an optimized AI system for both RAG and LLMs—pioneering a major shift in how AI systems are operated.”