Newsroom

Read our latest news and insights

Featured

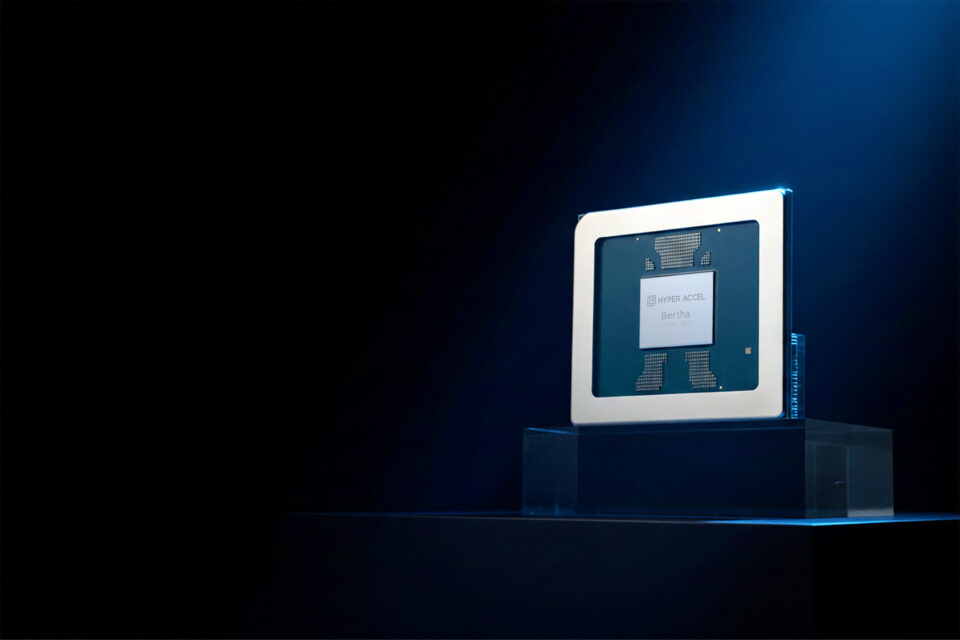

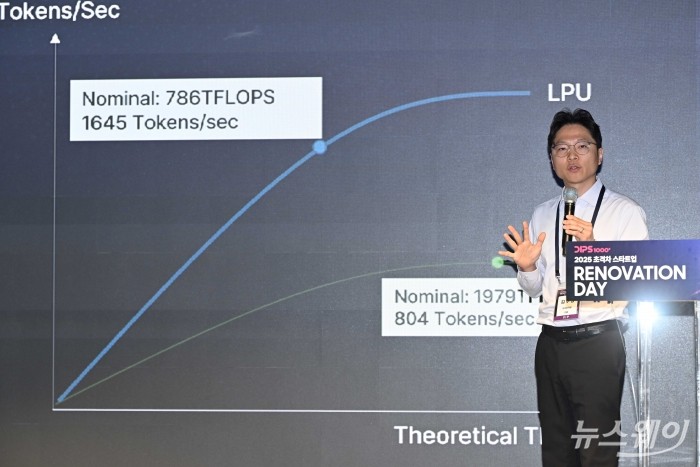

A Latency Processing Unit: A Latency-Optimized and Highly Scalable Processor for Large Language Model Inference

Published in: IEEE Micro (Volume: 44, Issue: 6, Nov.-Dec. 2024)

Authors: Seungjae Moon; Jung-Hoon Kim; Junsoo Kim; Seongmin Hong; Junseo Cha; Minsu Kim

Abstract: The explosive arrival of OpenAI’s...