Forte 55X

Running Generative AI at Unrivaled Speed

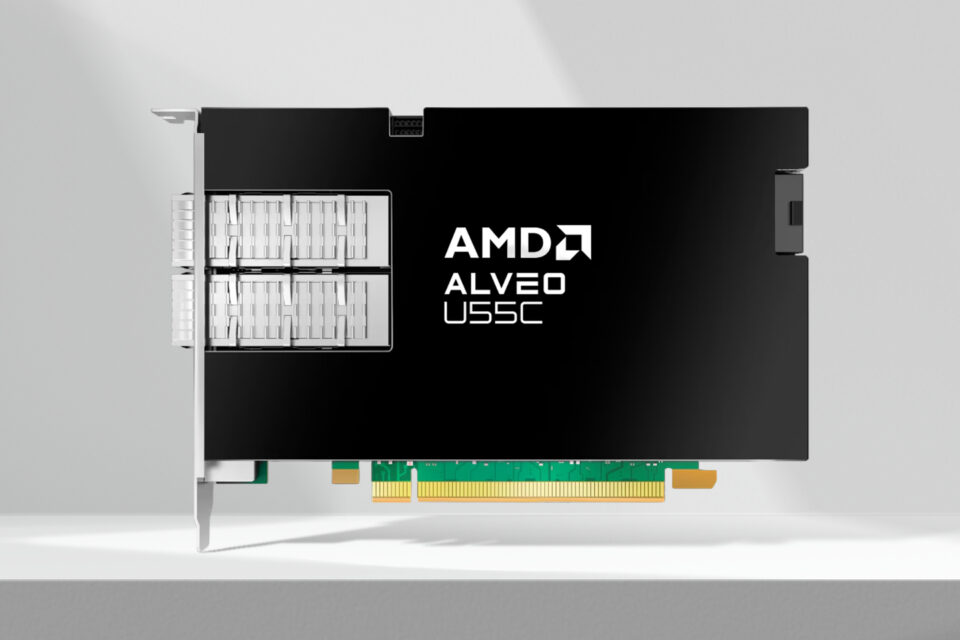

Optimized for low-latency, power-efficient AI inference by combining LPU technology with the HBM-equipped AMD Alveo U55C high-performance compute card.

Enables data privacy and customization for on-premises data centers.

Performance

Comparison with NVIDIA L4

Throughput

Tokens/sec

1.7x higher throughput than the NVIDIA L4

Efficiency

Tokens/sec/kW

1.42x higher efficiency than the NVIDIA L4
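
The two ratios above are linked through power draw, since efficiency is throughput divided by power. The following is a minimal sketch of that arithmetic. The function names are illustrative, and the implied power ratio is derived only from the two stated figures, assuming both were measured on the same workload.

```python
def tokens_per_sec_per_kw(tokens_per_sec: float, power_watts: float) -> float:
    """Efficiency metric: throughput divided by power expressed in kilowatts."""
    return tokens_per_sec / (power_watts / 1000.0)


def implied_power_ratio(throughput_ratio: float, efficiency_ratio: float) -> float:
    """Efficiency = throughput / power, so power ratio = throughput ratio / efficiency ratio."""
    return throughput_ratio / efficiency_ratio


# Ratios stated above (Forte 55X relative to the NVIDIA L4).
THROUGHPUT_RATIO = 1.7
EFFICIENCY_RATIO = 1.42

# Derived value, not a published measurement.
power_ratio = implied_power_ratio(THROUGHPUT_RATIO, EFFICIENCY_RATIO)
print(f"Implied power ratio: {power_ratio:.2f}x")
```

Under these assumptions, the higher throughput comes with a somewhat higher power draw during the measurement, which is why the efficiency ratio is smaller than the throughput ratio.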

Key Features

LPU-based Architecture

Streamlined memory access with precise alignment of memory bandwidth and compute bandwidth, for 90% hardware utilization during inference.

SoC Integration

Based on the AMD Alveo U55C FPGA for reconfigurability, power savings, and fast time-to-market. Integrates HBM2 optimized for low-latency workloads. High-speed 100 Gbps Ethernet networking for superior scalability.

Multi-chip Scalability

Custom on-chip network controller that overlaps computation with communication to hide communication overhead and achieve near-perfect scalability.
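
The effect of overlapping computation with communication can be illustrated with a simple timing model. This is a sketch under stated assumptions, not a description of the actual network controller: the per-step compute and communication times are hypothetical, work is assumed to split evenly across devices, and ideal overlap is modeled as each step costing the longer of the two phases.

```python
def step_time_serial(compute_ms: float, comm_ms: float) -> float:
    """Without overlap, communication starts only after computation finishes."""
    return compute_ms + comm_ms


def step_time_overlapped(compute_ms: float, comm_ms: float) -> float:
    """With ideal overlap, the shorter phase is hidden behind the longer one."""
    return max(compute_ms, comm_ms)


def scaling_efficiency(single_device_ms: float, num_devices: int,
                       comm_ms: float, overlap: bool) -> float:
    """Speedup over one device divided by device count (1.0 means perfect scaling)."""
    compute_ms = single_device_ms / num_devices  # even work split (assumption)
    step = step_time_overlapped if overlap else step_time_serial
    multi_device_ms = step(compute_ms, comm_ms)
    return (single_device_ms / multi_device_ms) / num_devices


# Hypothetical numbers: 8 ms of compute on one device, 0.5 ms of communication per step.
for n in (2, 4, 8):
    serial = scaling_efficiency(8.0, n, 0.5, overlap=False)
    hidden = scaling_efficiency(8.0, n, 0.5, overlap=True)
    print(f"{n} devices: serial {serial:.2f}, overlapped {hidden:.2f}")
```

In this model, efficiency stays at 1.0 for as long as the per-step communication time does not exceed the per-device compute time. Once it does, part of the communication becomes exposed and efficiency drops.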

HyperDex Software

Plug-and-play solution for seamlessly serving generative AI applications on HyperAccel hardware. Supports standard ML frameworks for inference (e.g., PyTorch, vLLM), with SDKs for further optimization, deployment, and profiling based on user needs.

Specifications

| Specification | Value |
| --- | --- |
| Target Frequency | 200 MHz |
| Number System | FP16 |
| DRAM Bandwidth | HBM2, 460 GB/s |
| DRAM Size | 16 GB |
| SRAM Size | 2 MB |
| Power Consumption | 75 W |
| Supported Models | LLM (e.g., GPT, OPT, Llama, Claude, Phi) |
| Batch Size | 1 |
| Form Factor | Single slot |
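
The specifications above allow a rough sizing estimate. The sketch below assumes that batch-1 token generation is bound by memory bandwidth (each generated token reads every weight once) and ignores KV-cache and activation traffic. The 1.3-billion-parameter model is a hypothetical example, 1 GB is taken as 10^9 bytes, and applying the 90% utilization figure to memory bandwidth is an interpretation rather than a vendor-stated result.

```python
HBM_BANDWIDTH_GB_PER_S = 460.0  # DRAM bandwidth from the specifications
DRAM_SIZE_GB = 16.0             # DRAM size from the specifications
BYTES_PER_PARAM = 2             # FP16 stores each parameter in 2 bytes
UTILIZATION = 0.90              # assumed to apply to memory bandwidth


def weight_footprint_gb(num_params: float) -> float:
    """Size of the model weights in GB at FP16 precision."""
    return num_params * BYTES_PER_PARAM / 1e9


def fits_on_card(num_params: float) -> bool:
    """True if the FP16 weights alone fit in on-card DRAM (ignores KV cache)."""
    return weight_footprint_gb(num_params) <= DRAM_SIZE_GB


def max_tokens_per_sec(num_params: float, utilization: float = UTILIZATION) -> float:
    """Upper bound on batch-1 decode rate if every token streams all weights once."""
    return utilization * HBM_BANDWIDTH_GB_PER_S / weight_footprint_gb(num_params)


params = 1.3e9  # hypothetical model size
print(f"Weights: {weight_footprint_gb(params):.1f} GB, fits: {fits_on_card(params)}")
print(f"Bandwidth-bound ceiling: {max_tokens_per_sec(params):.0f} tokens/sec")
```

This is an upper bound on a simplified model, not a benchmark result. Measured throughput depends on the model architecture, sequence length, and software stack.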