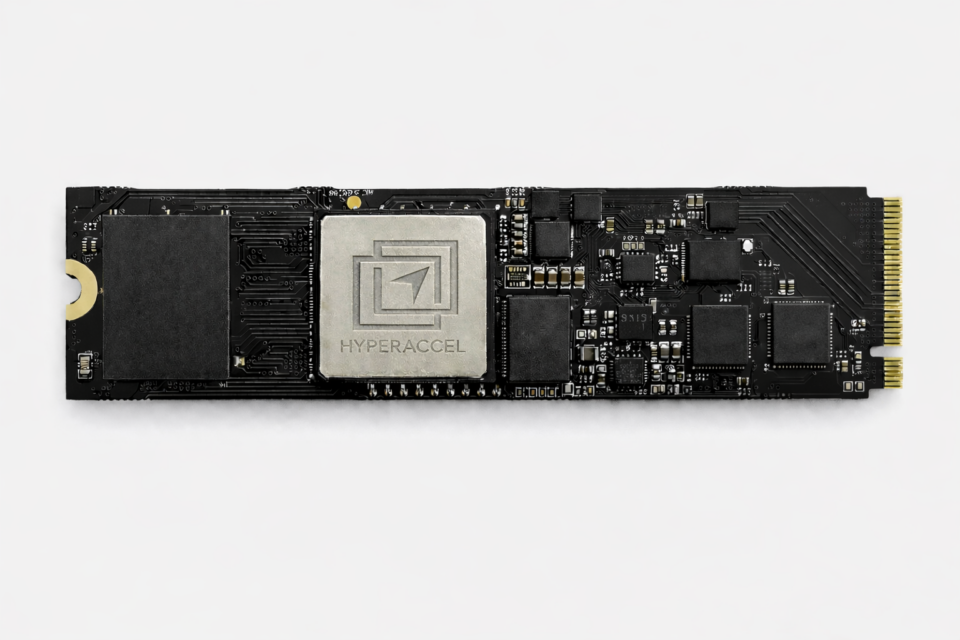

Bertha 100

Coming Q4, 2026

Pioneering Agentic AI with Versatile Edge Computing

Optimized for real-time processing of multi-modal AI inference

Real-time processing and user personalization

Key Features

LPU-based Architecture

Streamlined memory access with precise alignment of memory bandwidth and compute bandwidth for 90% hardware utilization during inference. Integration of peripheral processors to enable multi-modal computation.
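As a rough illustration of what balancing memory bandwidth against compute bandwidth means, the sketch below applies a simple roofline model to the figures listed under Specifications (32.768 TFLOPS at FP8, 64 GB/s DRAM bandwidth). The roofline model and the function names are assumptions chosen for illustration. They are not a description of how the LPU schedules work internally.

```python
# Roofline-style sketch using the datasheet figures.
# The model and all names here are illustrative assumptions.

PEAK_FLOPS = 32.768e12   # FP8 peak from the spec list, in FLOP/s
DRAM_BANDWIDTH = 64e9    # LPDDR5x bandwidth from the spec list, in bytes/s


def balance_point(peak_flops: float, bandwidth: float) -> float:
    """Arithmetic intensity (FLOP per byte) at which compute and memory limits meet."""
    return peak_flops / bandwidth


def attainable_flops(intensity: float,
                     peak_flops: float = PEAK_FLOPS,
                     bandwidth: float = DRAM_BANDWIDTH) -> float:
    """Upper bound on throughput for a kernel with the given FLOP/byte intensity."""
    return min(peak_flops, intensity * bandwidth)


ridge = balance_point(PEAK_FLOPS, DRAM_BANDWIDTH)
low_intensity_bound = attainable_flops(2.0)      # memory-bound kernel
high_intensity_bound = attainable_flops(1000.0)  # compute-bound kernel

if __name__ == "__main__":
    print(f"balance point: {ridge:.0f} FLOP/byte")
    print(f"bound at 2 FLOP/byte: {low_intensity_bound / 1e9:.0f} GFLOP/s")
    print(f"bound at 1000 FLOP/byte: {high_intensity_bound / 1e12:.3f} TFLOP/s")
```

Under this model, kernels below the balance point are limited by DRAM bandwidth rather than by the compute units, which is why memory access efficiency matters for inference workloads.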

SoC Integration

Advanced integration of the LPU, fabricated on a 4nm technology node, with 4 channels of LPDDR5x for a state-of-the-art system-on-chip design. Offered as a PCIe card or as IP, depending on customer needs.

Multi-chip Scalability

Custom on-chip network controller for computation-communication overlapping to hide the communication overhead and achieve near-perfect scalability.
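The effect of overlapping computation with communication can be sketched with a simple timing model. The model below is an assumption for illustration (one compute phase and one communication phase per step, with perfect overlap when enabled), and the example durations are hypothetical, not measured values for this product.

```python
# Toy timing model for computation-communication overlap.
# All names and numbers are hypothetical, for illustration only.


def step_time_serial(compute_s: float, comm_s: float) -> float:
    """Communication starts only after computation finishes."""
    return compute_s + comm_s


def step_time_overlapped(compute_s: float, comm_s: float) -> float:
    """Communication runs concurrently with computation (ideal overlap)."""
    return max(compute_s, comm_s)


def scaling_efficiency(compute_s: float, comm_s: float, overlapped: bool) -> float:
    """Fraction of each step spent on useful computation."""
    if overlapped:
        step = step_time_overlapped(compute_s, comm_s)
    else:
        step = step_time_serial(compute_s, comm_s)
    return compute_s / step


# Hypothetical step: 1.0 ms of compute, 0.25 ms of inter-chip communication.
serial_eff = scaling_efficiency(1.0e-3, 0.25e-3, overlapped=False)
overlap_eff = scaling_efficiency(1.0e-3, 0.25e-3, overlapped=True)

if __name__ == "__main__":
    print(f"serial efficiency:     {serial_eff:.2f}")
    print(f"overlapped efficiency: {overlap_eff:.2f}")
```

In this model the communication cost disappears from the step time whenever it is shorter than the compute phase, which is the condition under which scaling across chips approaches ideal.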

HyperDex Software

Plug & play solution for seamlessly serving generative AI applications on HyperAccel hardware. Supports standard ML frameworks for inference (e.g., PyTorch, ONNX, vLLM), with SDKs for further optimization, deployment, and profiling based on user needs.

Specifications

- FP8: 32.768 TFLOPS
- Target Frequency: 1.0 GHz
- Number System: BF16, FP8, FP4, INT8, INT4
- DRAM Bandwidth: LPDDR5x, 64 GB/s
- DRAM Size: 16 GB
- Form factor: M.2
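A few back-of-the-envelope figures follow from these specifications. The sketch below is an illustration under stated assumptions: 1 GB is taken as 10^9 bytes, one multiply-accumulate is counted as 2 FLOPs, and the 8-billion-parameter INT4 model is a hypothetical workload, not one named by the vendor. The token rate is a memory-bandwidth upper bound that assumes every weight is read once per generated token and ignores KV-cache traffic.

```python
# Back-of-the-envelope arithmetic from the spec list.
# Unit conventions and the example model are assumptions (see text above).

PEAK_FP8_FLOPS = 32.768e12   # FLOP/s
CLOCK_HZ = 1.0e9             # target frequency
DRAM_BANDWIDTH = 64e9        # bytes/s
DRAM_SIZE = 16e9             # bytes, assuming 1 GB = 1e9 bytes

# Implied FP8 work per clock cycle.
flops_per_cycle = PEAK_FP8_FLOPS / CLOCK_HZ
macs_per_cycle = flops_per_cycle / 2   # assuming 1 MAC = 2 FLOPs


def weight_bytes(num_params: float, bits_per_param: int) -> float:
    """Storage needed for model weights at a given precision."""
    return num_params * bits_per_param / 8


def max_decode_tokens_per_s(model_bytes: float, bandwidth: float) -> float:
    """Bandwidth-bound ceiling: all weights streamed once per token."""
    return bandwidth / model_bytes


# Hypothetical workload: 8e9 parameters stored as INT4.
example_bytes = weight_bytes(8e9, 4)
fits_in_dram = example_bytes <= DRAM_SIZE
token_ceiling = max_decode_tokens_per_s(example_bytes, DRAM_BANDWIDTH)

if __name__ == "__main__":
    print(f"FP8 FLOPs per cycle: {flops_per_cycle:.0f}")
    print(f"FP8 MACs per cycle:  {macs_per_cycle:.0f}")
    print(f"example weights: {example_bytes / 1e9:.1f} GB, fits: {fits_in_dram}")
    print(f"decode ceiling: {token_ceiling:.1f} tokens/s")
```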